Private Thoughts Blog

Privacy is Power | AI Trust and Control | Tools Shared Freely

How Wearable Data Changes Your Behavior Without You Noticing

Wearables don’t just track—they influence. Scores become trusted signals that shape decisions, creating a loop: data → score → behavior → new data. Over time, users optimize for the system, narrowing choices and redefining “normal.” The system doesn’t control behavior—it shapes what feels like the right choice.

How Wearables Predict Your Health Before You Feel It

Wearables are shifting from tracking to predicting. By building baselines from sleep, heart rate, and activity, they detect subtle changes and forecast outcomes before symptoms appear. Over time, these predictions influence behavior, redefining “normal” based on the model—raising the key question: who controls the system guiding your decisions?

How Smart Homes Collect Data and Why Your Home Isn’t Private

Smart home devices track voice, motion, and behavior to build detailed patterns of life. Learn how Alexa, Ring, and connected systems collect and use your data, and how hosting local-first models on personal devices can help you keep it private.

Who Owns Your Wearable Data? The Reality Behind Health Tracking

You generate wearable data, but you don’t truly control it. Platforms like Apple, Google, and Fitbit store and process it in centralized systems, where access doesn’t equal control. Data becomes tied to proprietary ecosystems and often falls outside protections like HIPAA. Real control depends on where the data lives—not who “owns” it.

When Your Body Becomes Data: How Wearables Track Health and Who Controls It

Wearables track biological signals to build a baseline of how your body functions, making health data predictive. Over time, this data can leave your control, be used externally (even as evidence), and shape decisions about you. A local-first model keeps data private, turning tracking into personal insight instead of externalized risk.

Smart Home Intelligence

Smart homes don’t just automate—they observe. Devices track behavior to build a “pattern-of-life” model that predicts routines. When this data leaves the home (e.g., via Amazon Alexa or Ring), it becomes persistent and external. Local-first systems keep data inside, turning insight into private awareness instead of extraction.

When Social Networks Become Predictive Systems

Series: Seeing the Social Program (4/4)

Social platforms model relationships as networks, using graph data to predict group behavior and influence information flow. Through ranking and targeting, they shape environments and norms indirectly. Companion Memory keeps your social data local, prioritizing user awareness over external modeling.

How Algorithms Learn Your Emotions (And Use Them)

Series: Seeing the Social Program (3/4)

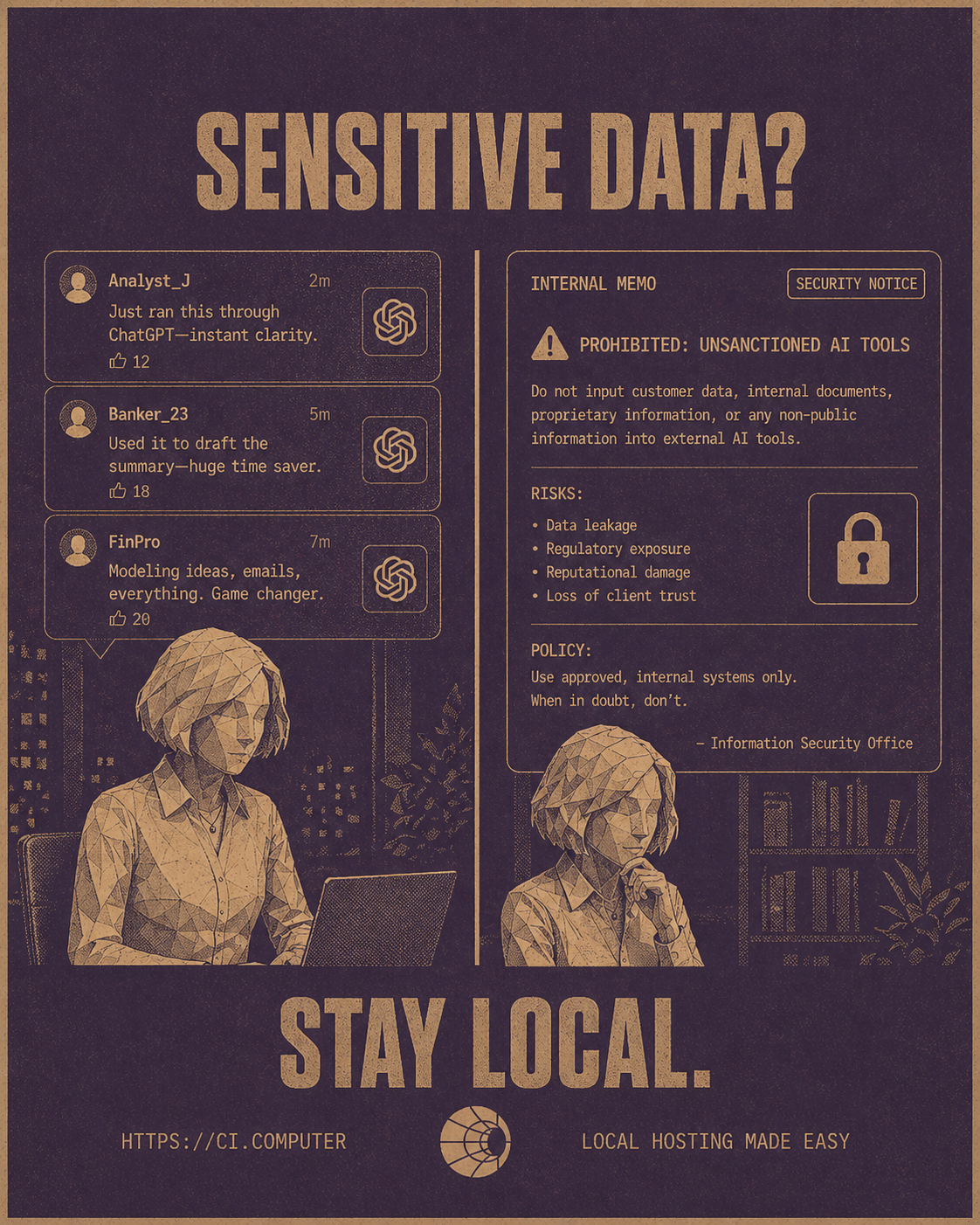

Platforms infer emotional states using behavior signals like language, timing, and engagement. These signals power feedback loops that shape content exposure and reinforce responses. How can we escape these loops, or learn from them? Companion Intelligence offers a local-first model that collects public context to create private, user-controlled insight.

AI in Finance Is Powerful, But It Can Expose What Matters Most

Financial firms are restricting AI tools for a reason. Real cases show how everyday workflows can expose sensitive data, and why control is becoming the priority.

Your Messages Aren’t Just Messages — They’re Behavioral Data

Series: Seeing the Social Program (2/4)

Messages generate metadata, such as timing, frequency, and patterns, that platforms use to model relationships and behavior. These systems influence visibility and interaction indirectly. Companion Intelligence proposes a local-first alternative, keeping relationship data private and user-controlled.

How Social Data Shapes Your Relationships, Emotions, and Reality

Series: Seeing the Social Program (1/4)

Social platforms turn your interactions into data—mapping relationships, inferring emotional responses, and shaping the information you see. Over time, this creates environments that influence behavior indirectly.

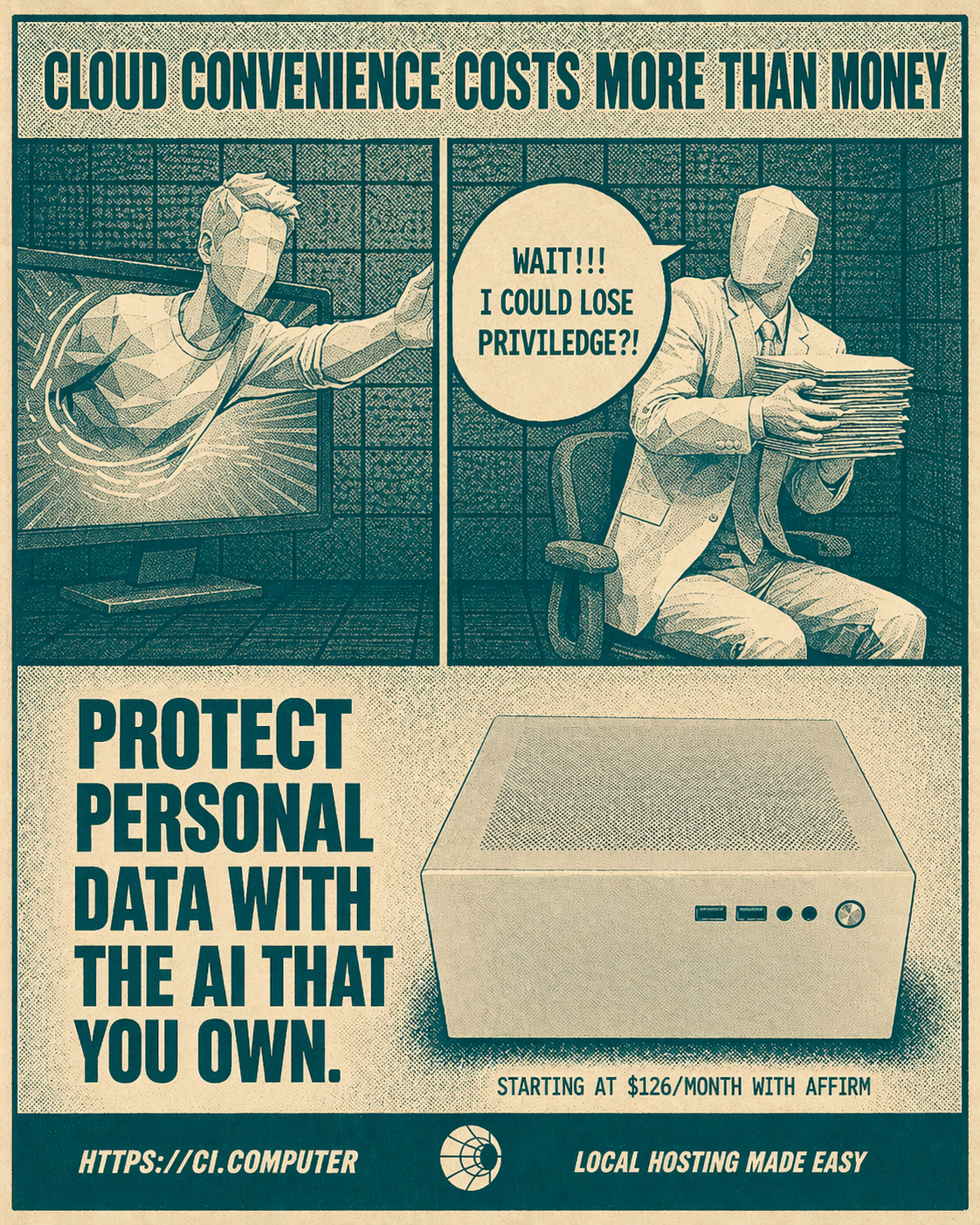

Legal Data Deserves Local Control

Cloud tools expose legal data to risk. Learn why local-first AI systems give lawyers and clients control, privacy, and true data sovereignty.

Legal Data in the Cloud: How Lawyers Accidentally Waive Attorney-Client Privilege Using Everyday Tools

Modern legal workflows rely heavily on cloud storage, shared links, and AI tools, but these systems are designed for collaboration, not confidentiality. This article explains how legal data exposure happens without breaches, why privilege loss is irreversible, and how lawyers and professionals can reduce risk by rethinking where and how sensitive information is stored and processed.

Writing a Blog Pre and Post OpenClaw

Start thinking about automation not as a replacement, but as an assistant.

Unclouding Personal AI Agents

AI is evolving from simple tools into persistent systems that learn, act, and improve over time. Discover how personal AI agents are reshaping work, the risks of delegated cognition, and why data control matters more than ever.

Stewardship Systems: Building Ethical & Sustainable AI Operations

As machine learning becomes woven into every layer of infrastructure, responsible technologists are shifting their focus from speed and scale to stewardship. Designing systems that conserve energy, respect privacy, and remain auditable is the foundation of sustainable intelligence.

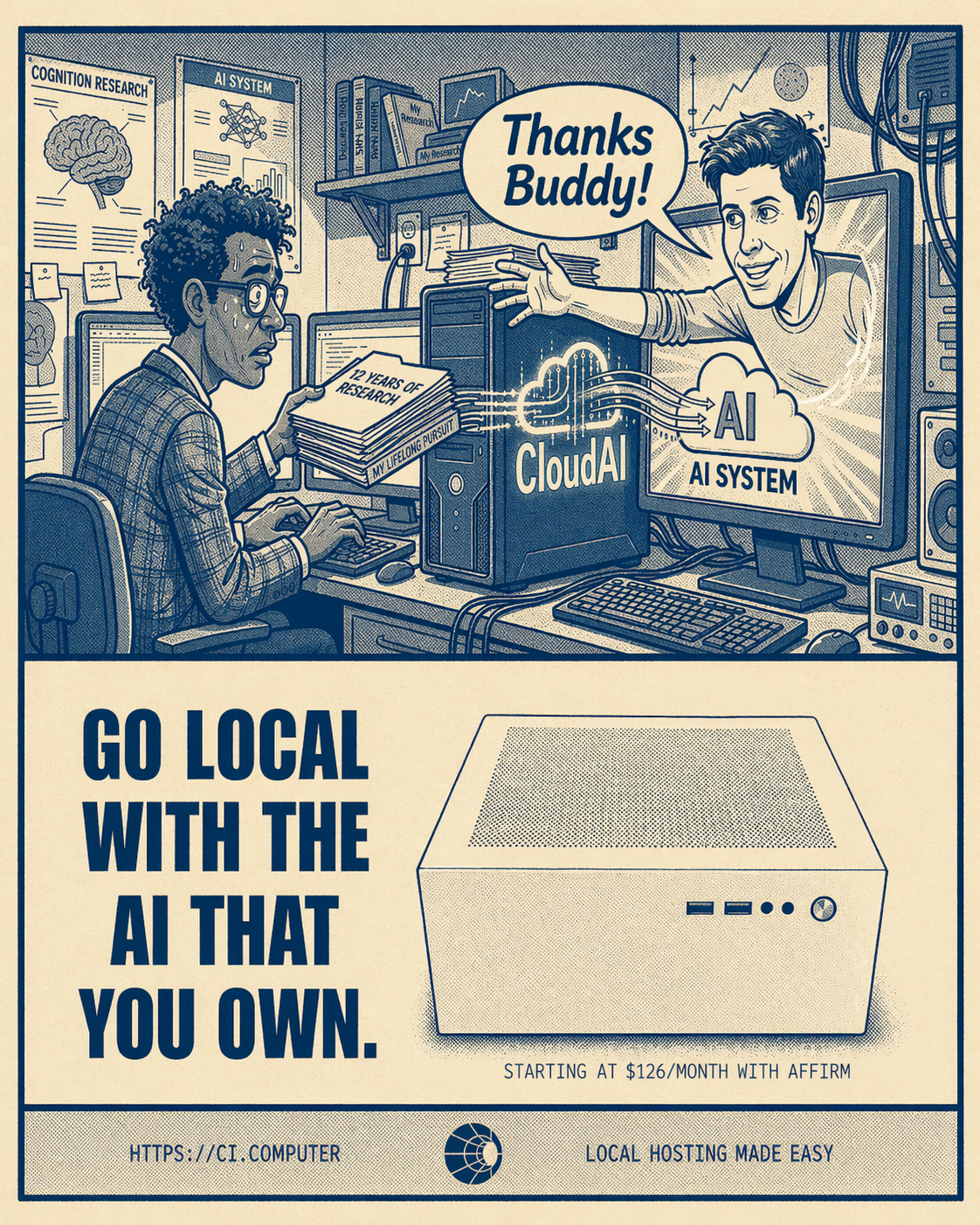

The Mirage of the Cloud: The Costs of Convenience

Cloud AI feels limitless—until you examine what it really costs in money, energy, and autonomy. This post helps readers uncover the hidden dependencies behind cloud computing and introduces practical methods for measuring their true “cloud tax.”