Unclouding Personal AI Agents

The Machine That Stays On

As of September 2025, AI use has become routine for many Americans, with 62% of U.S. adults reporting they interact with AI at least several times per week, according to Pew Research Center.

This frequency signals widespread behavioral adoption, but not necessarily deep integration. Other studies show that while usage is common, it is often episodic, task-specific, or not even recognized as AI by users.

Most people still use AI like a vending machine: insert a prompt, receive an output, and move on. Nothing carries forward, nothing compounds, and nothing learns who you are or how you work.

Personal AI agents are different. They behave less like vending machines and more like workshop machines that stay running overnight, remembering the last cut, adjusting the next one, and slowly shaping the work alongside you.

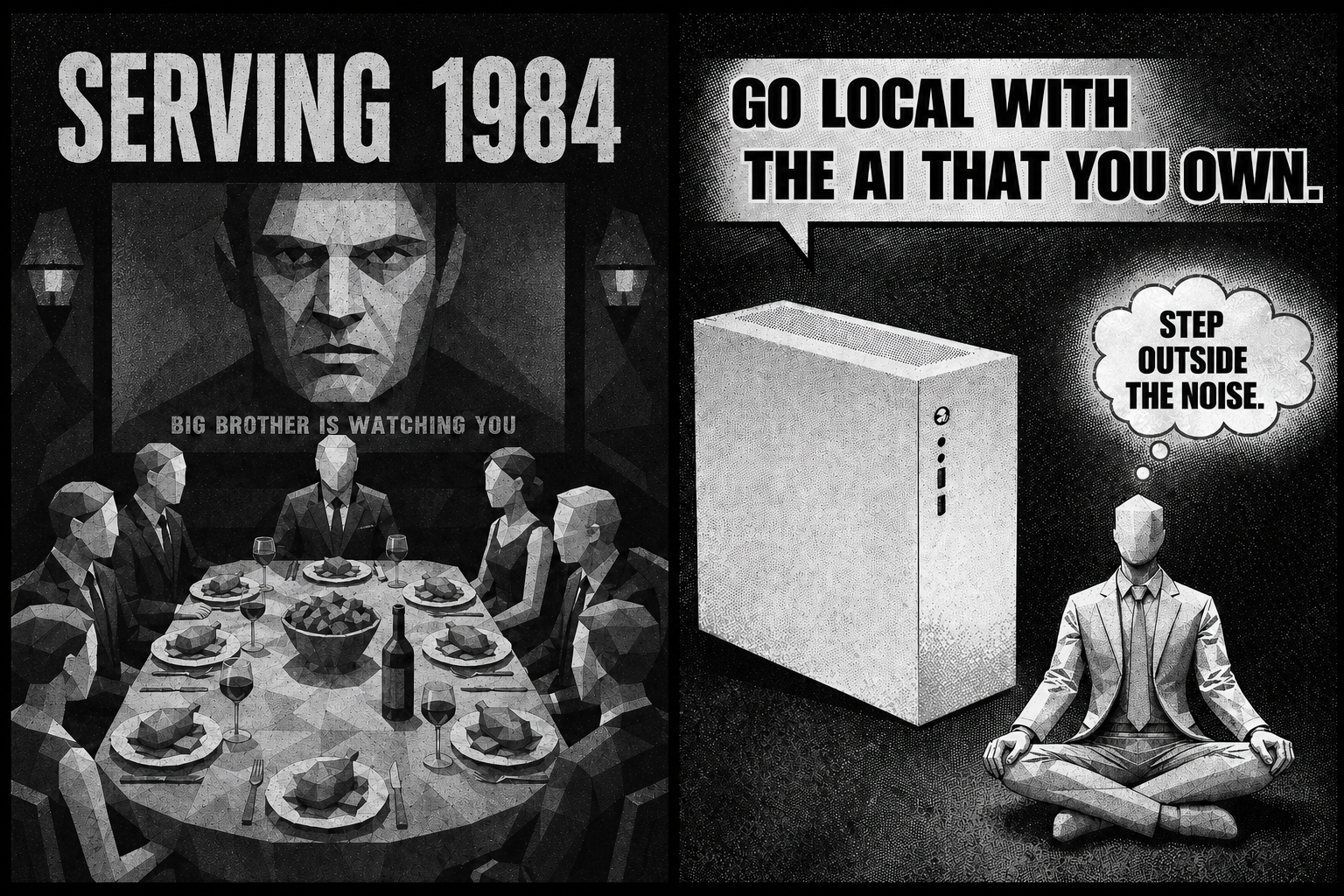

If it sounds too good to be true, it usually is. Systems that promise outcomes without process always come with terms.

Consider the old tale of The Elves and the Shoemaker. A cobbler, down to his last materials, wakes to find a pair of perfectly made shoes completed overnight. He sells them immediately, earning enough to buy leather for two more pairs. The pattern repeats until, staying up late one night, the cobbler discovers the source of his good fortune.

The story branches from there. It is often told as kindness rewarded. But some versions tell of a gift that at first feels like relief. Then like leverage. Then like dependence. A warning: when outcomes arrive without process, the source of value becomes invisible and control quietly shifts.

Reality Check

If the most leveraged operators are already working at the level of inference, everyone else is already behind.

There was a time when a security breach, like those reported in systems such as OpenClaw, would have ended a project before it ever found its footing. Trust was fragile. One failure was enough to collapse adoption.

Today, the landscape looks very different. Vulnerabilities, data leaks, and unstable behaviors are not rare events. They are ambient. They exist across systems, platforms, and workflows, often accepted as the cost of moving fast.

And yet, adoption accelerates.

Not because the systems are fully trusted, but because the upside compounds faster than the risks can be resolved. The people who benefit most are not waiting for stability. They are building advantage in motion, learning how to operate inside imperfect systems while others hesitate at the boundary.

That creates a widening gap.

Those who treat AI as a tool remain bound to prompts and outputs. Those who treat it as a system begin to operate at the level of inference, delegation, and accumulation. One group works request by request. The other builds momentum.

So the question isn’t whether others can catch up. It’s whether they’re willing to change how they work.

Because keeping up doesn’t come from using the same tools. It comes from adopting the same model of operation: building systems that remember, act, and improve over time, while developing the judgment to guide them.

The risk is real. The systems are imperfect. But the asymmetry is already here.

From Tools to Systems

Most AI tools today are transactional, meaning you ask a question, receive an answer, and the interaction resets. Even advanced systems summarize documents, draft emails, and generate ideas without retaining meaningful continuity.

Personal AI introduces a structural shift by creating continuity across time. Systems like OpenClaw and Hermes move beyond chat interfaces by connecting to your tools, retaining context, and acting with permission.

This is the shift most people miss: AI is moving from something you visit to something that works alongside you.

The Personal AI Stack

Interface Layer: Assistants

Tools like ChatGPT and Google Gemini sit at the surface and are optimized for speed, flexibility, and conversation. They help with thinking and writing but remain largely session-based, meaning they do not persist deeply across time.

Execution Layer: Orchestrators

OpenClaw represents orchestration by connecting across apps, APIs, and workflows to execute multi-step tasks automatically. Users deploy it for scheduling, research, and operations, and in some cases for more complex workflows like trading or logistics. Its strength comes from action, but its consistency depends on how well the system is structured around it.

Learning Layer: Adaptive Agents

Hermes represents a different approach by learning from outcomes and refining behavior over time. It builds reusable skills from repeated actions, introducing procedural memory that reflects not just what to do but how to do it more effectively with each iteration.
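To make "procedural memory" concrete, here is a minimal Python sketch of the idea under simple assumptions: an action sequence that succeeds a set number of times (the hypothetical `PROMOTION_THRESHOLD`) is promoted to a reusable skill for that goal. The class, method, and action names are illustrative only; this is not how Hermes is implemented or exposed.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical threshold: successful runs needed before a sequence becomes a skill.
PROMOTION_THRESHOLD = 3


@dataclass
class SkillMemory:
    """Toy procedural memory that promotes repeatedly successful action sequences."""

    successes: dict = field(default_factory=lambda: defaultdict(int))
    skills: dict = field(default_factory=dict)

    def record(self, goal: str, actions: tuple, succeeded: bool) -> None:
        # Failed attempts are ignored; only successful outcomes count toward promotion.
        if not succeeded:
            return
        key = (goal, actions)
        self.successes[key] += 1
        # The first sequence to reach the threshold becomes the stored skill for this goal.
        if self.successes[key] >= PROMOTION_THRESHOLD and goal not in self.skills:
            self.skills[goal] = actions

    def recall(self, goal: str):
        # Return the learned procedure for a goal, or None if nothing has been promoted yet.
        return self.skills.get(goal)


memory = SkillMemory()
steps = ("open_inbox", "filter_invoices", "export_csv")
for _ in range(PROMOTION_THRESHOLD):
    memory.record("monthly_invoices", steps, succeeded=True)
print(memory.recall("monthly_invoices"))  # ('open_inbox', 'filter_invoices', 'export_csv')
```

A real adaptive agent would also need a way to replace a stored skill when a better sequence emerges; this sketch simply keeps the first one that proves reliable.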

Real-World Use Cases

In construction operations, personal AI agents track permits, monitor project timelines, and flag subcontractor delays before they escalate. One firm reported saving nearly 45 minutes each morning, but the more important gain was fewer missed decisions and improved operational awareness.

In e-commerce, agents manage inventory updates and customer responses, reducing response time while maintaining consistency across interactions. In research workflows, agents gather, summarize, and organize information into structured outputs that would otherwise require hours of manual effort.

Across emerging studies of agent systems, usage is shifting from passive assistance toward direct action, including sending emails, editing files, and executing tasks autonomously. The pattern is clear: AI is moving from advising to doing.

The Opportunity: Delegated Cognition

Personal AI compresses effort by turning multi-step workflows into single instructions while preserving context across time. This reduces repetition and allows work to compound rather than reset.

Over time, these systems begin to approximate judgment by recognizing patterns in decisions and outcomes. This is not just automation but delegated cognition, where parts of the thinking process are handled by the system itself.

Like a workshop machine refining each pass, the system improves with use, producing better results as it learns how you work.

The Challenge: Thin Authorship

Traditional skill development relied on repetition, correction, and gradual refinement of judgment. Personal AI agents can shortcut that process by producing outcomes without requiring the same level of human formation.

This introduces a risk of thin authorship, where outputs are technically correct but lack depth of understanding. You may still own the result, but your connection to how it was formed becomes weaker over time.

As the system improves, the operator may not, which creates a subtle but important imbalance.

Security and Control Risks

Personal AI systems require access to function, often connecting to email, calendars, files, and messaging platforms. This level of access introduces real exposure, especially if permissions are granted too broadly or too quickly.

Agent-based systems have already demonstrated vulnerabilities in real-world scenarios, including unsafe execution paths and excessive permissions that could be exploited if compromised. Even without malicious intent, misconfigurations can lead to unintended actions or data leaks.

Local systems reduce reliance on external infrastructure but introduce their own risks, including device security and physical access. The threat model changes, but it does not disappear.

Cloud, Local, Hybrid

Every personal AI system ultimately answers one question: where does your thinking live? Cloud-based systems offer speed and access to powerful models but require data to move through external infrastructure. Local systems provide greater control by keeping processing on your device but require more setup and maintenance.

Most real-world implementations become hybrid systems, where sensitive tasks remain local and more complex processing is handled in the cloud. This approach balances capability and control but requires clear boundaries and intentional design.
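A hybrid boundary can be written down as an explicit routing rule rather than left to case-by-case judgment. The Python sketch below assumes a hypothetical `LOCAL_ONLY` set of task types and a simple `needs_large_model` flag; a real system would draw these lines from its own data inventory.

```python
from enum import Enum


class Route(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


# Hypothetical boundary: task types whose data must never leave the device.
LOCAL_ONLY = {"contract_review", "tax_prep", "medical_notes"}


def route_task(task_type: str, needs_large_model: bool) -> Route:
    """Pick where a task runs under an explicit hybrid boundary."""
    # Sensitive work stays local even when a larger cloud model would do better.
    if task_type in LOCAL_ONLY:
        return Route.LOCAL
    # Other tasks go to the cloud only when the extra capability is actually needed.
    return Route.CLOUD if needs_large_model else Route.LOCAL
```

Writing the rule as code makes the boundary reviewable: anyone can read exactly which tasks are allowed to leave the device.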

A Practical Classification Model

Before automating any workflow, it is critical to classify the data involved. Highly sensitive information such as legal, financial, or medical data should remain local, while business-sensitive data can operate within hybrid systems with appropriate approvals.

Low-sensitivity tasks like scheduling or general research can safely use cloud-based processing. When uncertainty exists, it is safer to treat data as sensitive until proven otherwise. This simple discipline prevents most early-stage mistakes.
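The classification model above can be expressed as a small lookup with a conservative default. In this sketch the category names and the `CATEGORY_TIERS` mapping are hypothetical, and the rule that a workflow takes the strictest tier of any data it touches is an assumption; the default of treating unknown data as highly sensitive comes straight from the text.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    LOW = 1       # scheduling, general research -> cloud is acceptable
    BUSINESS = 2  # business-sensitive -> hybrid, with approvals
    HIGH = 3      # legal, financial, medical -> local only


# Hypothetical mapping of data categories to tiers; adjust to your own inventory.
CATEGORY_TIERS = {
    "legal": Sensitivity.HIGH,
    "financial": Sensitivity.HIGH,
    "medical": Sensitivity.HIGH,
    "client_pipeline": Sensitivity.BUSINESS,
    "internal_docs": Sensitivity.BUSINESS,
    "scheduling": Sensitivity.LOW,
    "general_research": Sensitivity.LOW,
}

PLACEMENT = {
    Sensitivity.HIGH: "local",
    Sensitivity.BUSINESS: "hybrid (approval required)",
    Sensitivity.LOW: "cloud",
}


def classify(categories: list[str]) -> Sensitivity:
    """Return the strictest tier a workflow touches; unknown categories count as HIGH."""
    if not categories:
        # Nothing declared: treat as sensitive until proven otherwise.
        return Sensitivity.HIGH
    return max(CATEGORY_TIERS.get(c, Sensitivity.HIGH) for c in categories)


def placement(categories: list[str]) -> str:
    return PLACEMENT[classify(categories)]
```

Because unknown categories fall through to `HIGH`, a forgotten entry in the mapping fails safe: the workflow stays local until someone classifies it deliberately.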

How Systems Actually Evolve

Personal AI adoption follows a predictable progression from reactive to proactive behavior. Initially, systems respond to direct commands, then move into scheduled automation where tasks run at defined intervals.

Over time, they become proactive by monitoring conditions and surfacing relevant insights before the user asks. This evolution does not happen instantly, as the system must learn patterns and preferences through repeated interaction.
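The three stages can be pictured as three kinds of trigger. This Python sketch uses a hypothetical `Trigger` class (not any product's API) to show the difference: reactive triggers wait for a command, scheduled triggers fire on an interval, and proactive triggers watch state for a condition. It does not model the learning that moves a system from one stage to the next.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, Optional


@dataclass
class Trigger:
    """One way an agent task can start (hypothetical model for illustration)."""

    name: str
    mode: str  # "reactive", "scheduled", or "proactive"
    interval: Optional[timedelta] = None  # used by scheduled triggers
    condition: Optional[Callable[[dict], bool]] = None  # used by proactive triggers
    last_run: Optional[datetime] = None

    def should_fire(self, now: datetime, state: dict, command: Optional[str] = None) -> bool:
        if self.mode == "reactive":
            # Stage 1: runs only when the user explicitly asks for it.
            return command == self.name
        if self.mode == "scheduled":
            # Stage 2: runs at a fixed interval regardless of what is happening.
            return self.last_run is None or now - self.last_run >= self.interval
        if self.mode == "proactive":
            # Stage 3: watches state and fires when a condition is met, before anyone asks.
            return self.condition is not None and self.condition(state)
        raise ValueError(f"unknown mode: {self.mode}")


# Example: flag a permit that expires within a week (field name is hypothetical).
permit_watch = Trigger(
    "permit_watch",
    "proactive",
    condition=lambda s: s.get("days_until_permit_expiry", 99) <= 7,
)
```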

What to Measure Early

Early success with personal AI should be measured through outcomes rather than features. Tracking time saved, missed follow-ups caught, and the ratio of drafted versus manually created work provides a clearer picture of value.

Monitoring error rates and required corrections helps define trust boundaries and ensures that automation remains controlled rather than unchecked.
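A plain log is enough to start measuring. The Python sketch below assumes a hypothetical `TaskRecord` schema and computes the measures named above: time saved, follow-ups caught, the drafted-to-manual ratio, error rate, and corrections per draft. The `ready_to_expand` check and its 5% threshold are arbitrary illustrations of turning error rates into a trust boundary.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    """One logged unit of work (hypothetical schema for a simple review log)."""

    drafted_by_agent: bool
    minutes_saved: float = 0.0
    corrections: int = 0  # edits the user made before accepting the draft
    error: bool = False  # agent output was wrong and had to be redone
    followup_caught: bool = False  # agent surfaced a follow-up that would have been missed


def summarize(records: list[TaskRecord]) -> dict:
    agent = [r for r in records if r.drafted_by_agent]
    manual = len(records) - len(agent)
    n = len(agent)
    return {
        "minutes_saved": sum(r.minutes_saved for r in agent),
        "followups_caught": sum(r.followup_caught for r in agent),
        # Drafted-to-manual ratio; infinite if everything was drafted, 0 if nothing was.
        "drafted_to_manual": n / manual if manual else (float("inf") if n else 0.0),
        "error_rate": sum(r.error for r in agent) / n if n else 0.0,
        "corrections_per_draft": sum(r.corrections for r in agent) / n if n else 0.0,
    }


def ready_to_expand(summary: dict, max_error_rate: float = 0.05) -> bool:
    # Hypothetical rule: widen the system's scope only while errors stay rare.
    return summary["error_rate"] <= max_error_rate
```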

Common Failure Modes

Most failures occur when systems are expanded too quickly, with users granting broad permissions before establishing trust. Many assume that “private” settings guarantee security while ignoring data retention and processing policies.

Others fail to define boundaries in hybrid systems or assume that local deployment eliminates risk entirely. In reality, each decision introduces tradeoffs that must be managed intentionally.

A Better Way to Start

A more effective approach begins with observation rather than implementation. Tracking one week of work reveals patterns that can be safely automated, particularly repetitive tasks with low sensitivity.
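The one-week audit can be as simple as a list of observed tasks, each tagged with a sensitivity level. This Python sketch, using illustrative task names and a hypothetical `min_repeats` cutoff, keeps tasks that recur often and were never tagged as anything other than low sensitivity.

```python
from collections import Counter


def automation_candidates(log: list[tuple[str, str]], min_repeats: int = 3) -> list[str]:
    """Return repetitive, low-sensitivity tasks from an observation log, most frequent first."""
    counts = Counter(task for task, _ in log)
    # A task tagged above "low" even once is excluded, erring on the side of caution.
    sensitive = {task for task, level in log if level != "low"}
    return [task for task, n in counts.most_common() if n >= min_repeats and task not in sensitive]


# Illustrative week of observations, not real data.
week = (
    [("triage inbox", "low")] * 5
    + [("update CRM", "business")] * 4
    + [("book meetings", "low")] * 3
    + [("file expenses", "low")]
)
```

Here `automation_candidates(week)` would surface "triage inbox" and "book meetings", while the frequent but business-sensitive CRM task is held back.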

Starting small, with limited permissions and required approvals, allows the system to demonstrate value before expanding its scope. Like any machine, performance improves when adjustments are made gradually.
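Limited permissions and required approvals can be modeled as a gate that every agent action must pass. The `PermissionGate` class and scope strings such as `"calendar.read"` are hypothetical; the design choices this Python sketch illustrates are deny-by-default, explicit grants, and new scopes requiring human approval until trust is established.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PermissionGate:
    """Least-privilege wrapper: only granted scopes run, and flagged ones need a human yes."""

    granted: set = field(default_factory=set)  # e.g. {"calendar.read"}
    needs_approval: set = field(default_factory=set)  # scopes that must be confirmed
    approve: Callable[[str, str], bool] = lambda scope, detail: False  # deny by default

    def request(self, scope: str, detail: str) -> bool:
        if scope not in self.granted:
            return False  # never granted: refuse outright
        if scope in self.needs_approval:
            return self.approve(scope, detail)  # ask the human before acting
        return True

    def grant(self, scope: str, require_approval: bool = True) -> None:
        # Expanding scope is an explicit step, and new scopes default to requiring approval.
        self.granted.add(scope)
        if require_approval:
            self.needs_approval.add(scope)
```

Removing a scope from `needs_approval` once its error record is clean is how this sketch would let the system earn autonomy one permission at a time.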

Why This Matters Now

AI is no longer just a tool category; it is becoming an operational layer that reshapes how work is structured. Today, most workflows are fragmented across apps and sessions that do not share context.

Personal AI consolidates that context into systems capable of recognizing patterns and acting on them over time. The shift is already underway, and the question is not whether it will happen but who controls it.

What Comes Next

The next boundary is data movement, including where information goes, how long it is retained, and who has access to it. Once AI becomes a system rather than a tool, privacy is no longer a setting but an architectural decision that defines the entire experience.